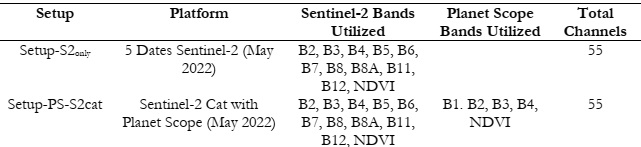

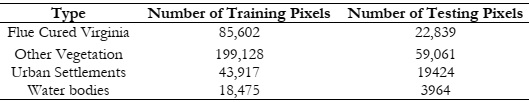

This section presents the results of our experiments on the datasets listed in Table 1. Each dataset is used with ConvLSTM, and the results are compared systematically. The collected GTD is split into training and testing sets at a ratio of 70% to 30% (Table 2). The testing data is completely unseen by the model and consists of separate crop fields rather than individual pixels, so pixels from a single field never appear in both sets. This measure is taken to ensure that the model generalizes.
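A field-level hold-out of this kind can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `split_by_field`, the field identifiers, and the choice to apply the 70/30 ratio to the number of fields (rather than to the number of pixels) are all assumptions.

```python
import random
from collections import defaultdict


def split_by_field(field_ids, test_fraction=0.3, seed=0):
    """Return (train_idx, test_idx) so that no field contributes to both sets."""
    # Group sample (pixel) indices by the field they belong to.
    by_field = defaultdict(list)
    for idx, fid in enumerate(field_ids):
        by_field[fid].append(idx)

    # Shuffle whole fields, not pixels, then hold out about test_fraction of them.
    fields = sorted(by_field)
    random.Random(seed).shuffle(fields)
    n_test = max(1, round(len(fields) * test_fraction))
    test_fields = set(fields[:n_test])

    train_idx = [i for f in fields if f not in test_fields for i in by_field[f]]
    test_idx = [i for f in test_fields for i in by_field[f]]
    return sorted(train_idx), sorted(test_idx)


# Hypothetical example: 10 fields with 5 labelled pixels each.
field_ids = [f"field_{k}" for k in range(10) for _ in range(5)]
train_idx, test_idx = split_by_field(field_ids)
```

Splitting on field identifiers instead of on pixels avoids the optimistic bias that arises when neighbouring, highly correlated pixels of one field end up on both sides of the split.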

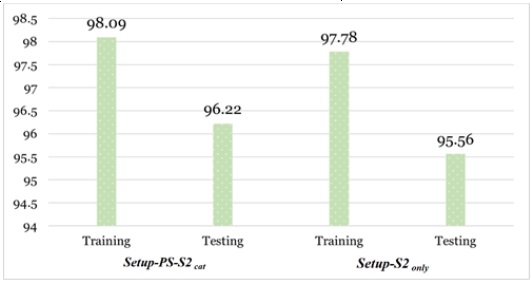

As illustrated in Figure 7, Setup-PS-S2cat outperforms Setup-S2only, attaining overall training and testing accuracies of 98.09% and 96.22%, respectively, compared with 96.18% and 93.43% for Setup-S2only.

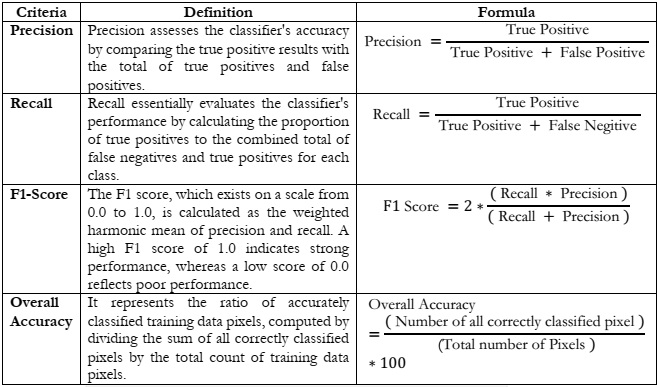

The classification evaluation criteria employed are listed in Table 3.
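Table 3 is not reproduced here. Assuming it lists the measures reported later in this section (precision, recall, F1-score, and overall accuracy), their standard definitions can be sketched from a confusion matrix as below. The function name `per_class_metrics` and the counts in `confusion` are made up for illustration and are not the paper's results.

```python
def per_class_metrics(confusion, labels):
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    n = len(labels)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(n))
    report = {}
    for k, name in enumerate(labels):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp  # predicted k, truly another class
        fn = sum(confusion[k]) - tp                       # truly k, predicted another class
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[name] = {"precision": precision, "recall": recall, "f1": f1}
    return report, correct / total


# Made-up counts for illustration only.
labels = ["FCV", "OT", "Urban", "Water"]
confusion = [
    [48, 2, 0, 0],
    [3, 45, 2, 0],
    [0, 1, 49, 0],
    [0, 0, 0, 50],
]
report, accuracy = per_class_metrics(confusion, labels)
```

Rows are true classes and columns are predicted classes; some libraries use the transposed convention, so the orientation should be checked before reusing the sketch.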

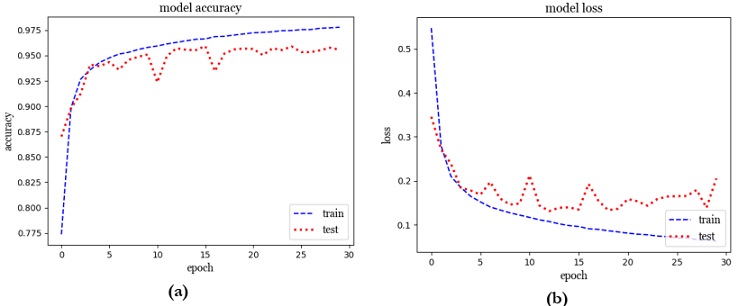

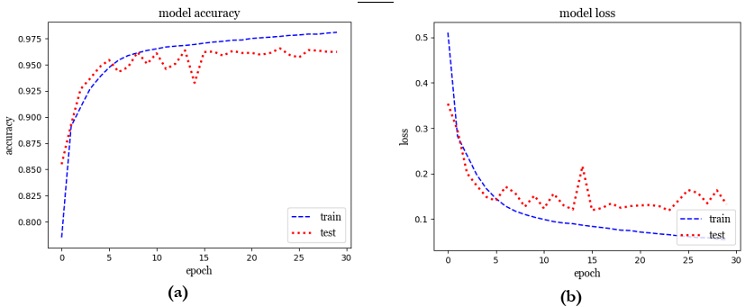

Throughout the 30 epochs of training depicted in Figure 9(a) and Figure 9(b), the model

demonstrated consistent improvement. It began with an initial loss of 0.5106 and an accuracy

of 78.50%, steadily progressing to an impressive accuracy of 98.09% by the end of the training

process. Concurrently, the loss steadily decreased from 0.5106 to 0.0541, highlighting the

model's improved performance. This progress extended to the validation set as well, where

accuracy increased from 85.49% to 96.22%. In tandem, the validation loss dropped significantly

from 0.3539 to 0.1325, indicating enhanced generalization. The model's training journey

showcased consistent enhancements in accuracy and reduced loss, affirming its effective

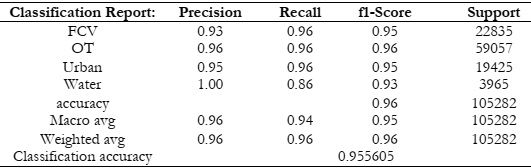

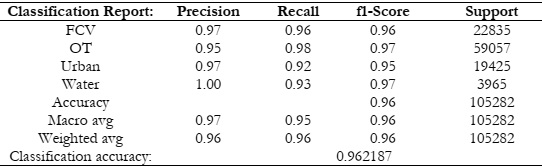

learning and robust performance. The classification report in Table assesses four classes: FCV,

OT, Urban, and Water. Notably, precision values are high, ranging from 0.95 to a perfect 1.00,

indicating accurate positive predictions. The model's recall values, ranging from 0.92 to 0.98,

show its ability to correctly identify actual instances. Balanced precision and recall result in

impressive F1-scores between 0.95 and 0.97, affirming overall effectiveness in classification. The

model's accuracy is strong at 0.96, indicating 96% correct classifications. The classification

accuracy value of 0.962187 underscores its reliability in predicting class labels.

Comparing Setup-S2only and Setup-PS-S2cat on the FCV class shows the advantage of Setup-PS-S2cat. It achieves higher precision (0.97 versus 0.93 for Setup-S2only), meaning that fewer samples of other classes are wrongly labelled as FCV. Both setups share the same recall (0.96), so they correctly identify an equal proportion of actual FCV instances. As a result, Setup-PS-S2cat attains the higher F1-score, 0.96 against 0.95 for Setup-S2only, reflecting a better balance between precision and recall for the FCV class.
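As a quick consistency check, the F1-score can be recomputed as the harmonic mean of the precision and recall quoted above. The sketch below uses only the FCV values reported in the text; the function name `f1_score` is illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# FCV precision and recall as reported in the text (rounded to two decimals).
fcv = {
    "Setup-PS-S2cat": {"precision": 0.97, "recall": 0.96},
    "Setup-S2only": {"precision": 0.93, "recall": 0.96},
}
f1 = {name: f1_score(m["precision"], m["recall"]) for name, m in fcv.items()}
for name, value in f1.items():
    print(f"{name}: F1 = {value:.4f}")
```

The recomputed values are about 0.965 and 0.945. Because the inputs are themselves rounded to two decimals, a recomputed F1 can differ from the reported one in the last digit, so these figures are in line with the reported 0.96 and 0.95.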

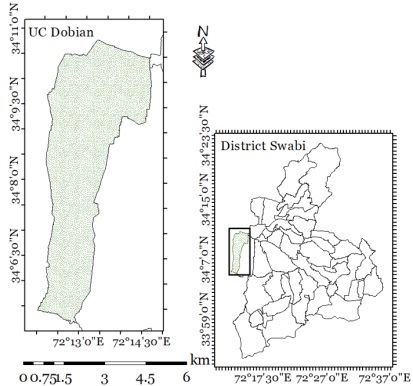

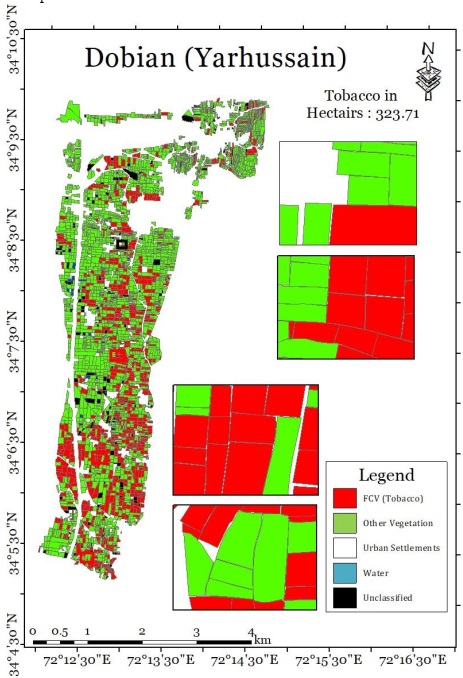

The classification map of Dobain (Yarhussain) is provided in Figure 11.

The effective use of multi-satellite data in our experimental setup lays out a plan for accurate estimation of tobacco crop acreage over a vast geographical area. In addition, the use of temporal data from Sentinel-2 and Planet-Scope improves the classification results. To further validate the classification results (depicted in Figure 11), validation surveys have been carried out in the pilot region to pinpoint False Positives and True Negatives. The classification map in Figure 11 presents the ConvLSTM results with 4 classes: Tobacco (Red), Other Vegetation (Green), Urban (White), and Water (Blue). The figure shows that Tobacco plantations are clearly distinguishable from the other classes. Our findings from the validation surveys confirm the DL model results with the same accuracy as presented in Table .
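Acreage can be derived from such a classification map by counting the pixels of each class and multiplying by the ground area of one pixel. The sketch below is an assumption-laden illustration rather than the system's implementation: the integer class codes, the tiny example map, and the 10 m pixel size are hypothetical, and the function name `class_acreage` is introduced here.

```python
from collections import Counter

SQ_M_PER_ACRE = 4046.8564224  # one international acre in square metres


def class_acreage(class_map, pixel_size_m, class_names):
    """Area in acres per class for a 2-D grid of integer class codes."""
    counts = Counter(code for row in class_map for code in row)
    pixel_area = pixel_size_m ** 2  # ground area of one pixel in square metres
    return {
        name: counts.get(code, 0) * pixel_area / SQ_M_PER_ACRE
        for code, name in class_names.items()
    }


# Hypothetical class codes and a tiny made-up map; 10 m is an assumed pixel size.
class_names = {0: "Tobacco", 1: "Other Vegetation", 2: "Urban", 3: "Water"}
class_map = [
    [0, 0, 1, 1],
    [0, 0, 1, 2],
    [3, 3, 2, 2],
]
acres = class_acreage(class_map, pixel_size_m=10.0, class_names=class_names)
```

A plain pixel count inherits the classifier's errors, so an acreage figure obtained this way is only as reliable as the per-class precision and recall of the map.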

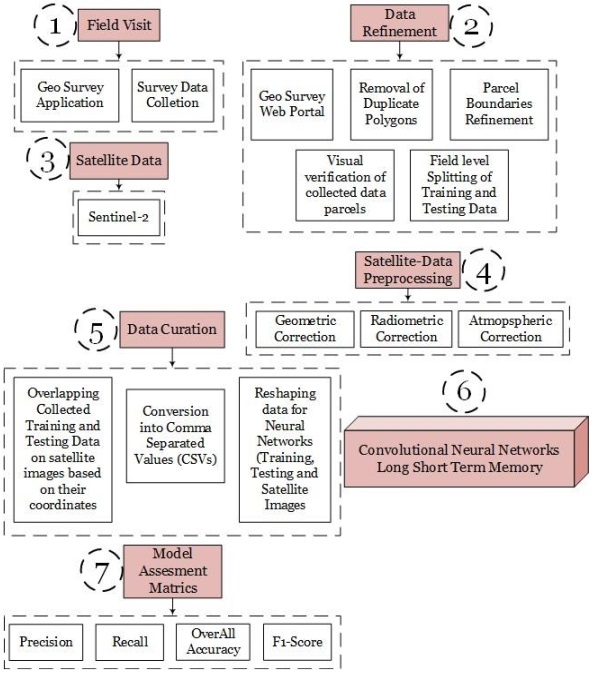

1. A brief overview of the modus operandi can be summarized in the following stages;

2. GTD is collected using the Geosurvey mobile application.

3. The data is split into training and testing with a 70/30 split ratio.

4. A synergy of Sentinel-2 and Planet-Scope is created to extract pixel information.

5. Temporal data of Sentinel-2 and Planet-Scope is taken for crop classification.

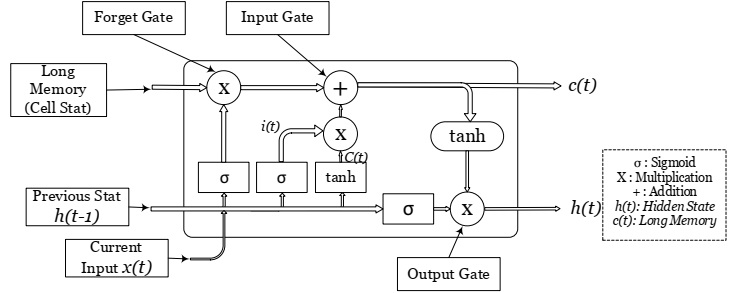

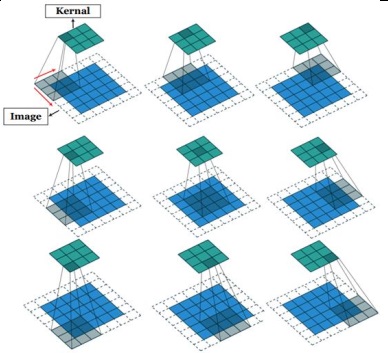

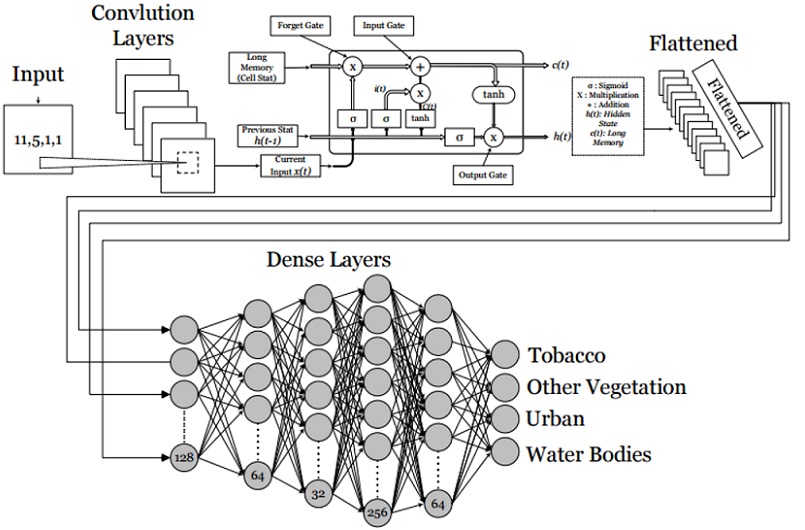

6. DLM-based ConvLSTM (A type of RNN) is developed.

7. During model training accuracy metrics are employed for measuring the performance of

ConvLSTM.

8. The trained model is used for crop map generation.

9. The generated crop map consists of Tobacco plantation with a testing accuracy of 96.2%

10. Ground truth validation survey is also performed in the region of interest to ensure

accurate classification results.

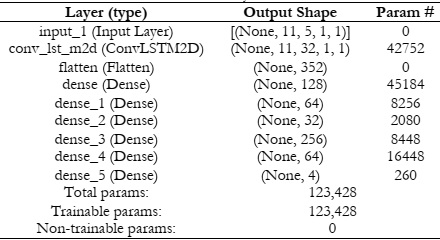

The summary of the ConvLSTM model is given in Table .

The methodology is implemented in our land cover and land use system, “Agriltytics” (Figure 10). More details about the system are available at ncbcpeshawar.com.
