Testing Chatbot Systems using Agentic AI Approach

Keywords:

AI Agent, Chatbot Evaluation, Conversational AI, Agentic Testing, Large Language Models, Contextual Intelligence, Autonomous Evaluation

Abstract

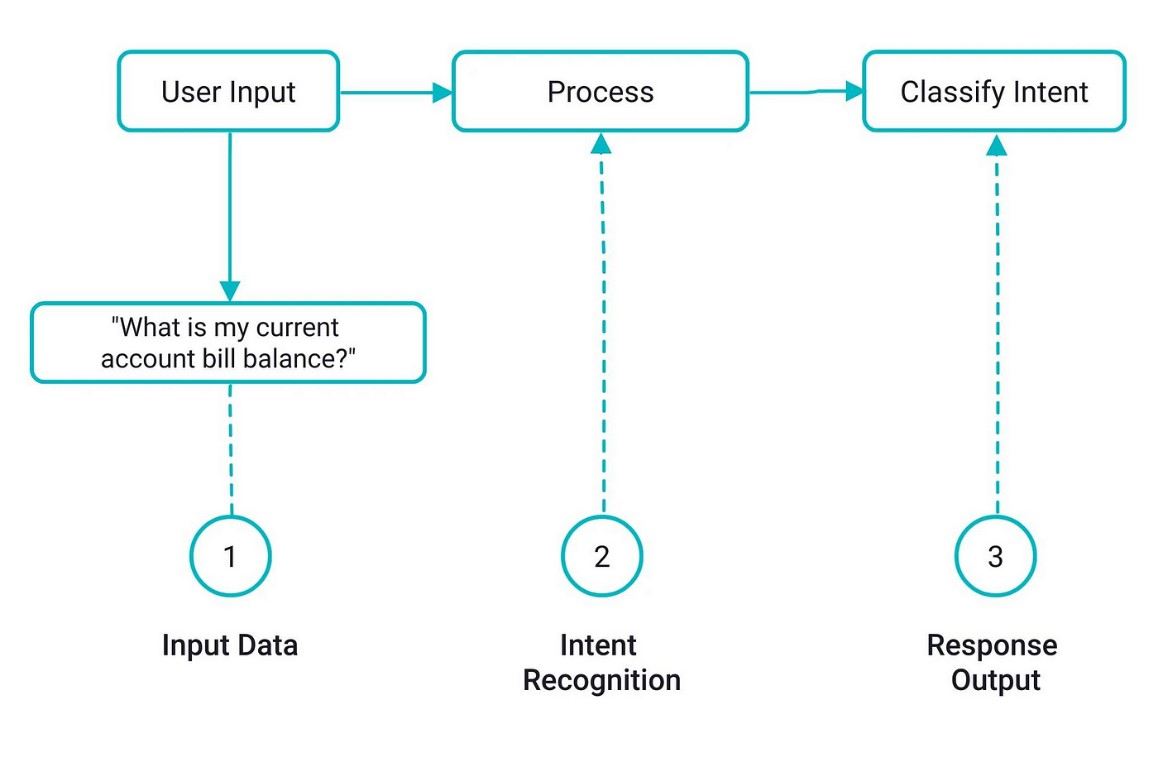

As large language models (LLMs) are increasingly integrated into real-world applications, robust and scalable evaluation methods are essential to ensure their reliability, safety, and effectiveness. This work introduces an evaluation framework based on agentic AI simulation, designed to overcome the limitations of traditional testing methodologies for newly developed chatbots. Unlike conventional methods that depend on static benchmarks or human evaluators, our approach employs autonomous AI agents that simulate a wide spectrum of user interactions. Within a controlled multi-agent environment, these evaluator agents interact with the target chatbot through natural language queries designed to probe functional capabilities, identify edge cases, and uncover potential failure modes. The methodology systematically assesses chatbot performance along multiple dimensions, including task completion efficiency, contextual understanding in dynamic conversations, and adherence to safety and ethical guidelines. By incorporating recent advances in agentic metrics and automated scenario generation, the system produces detailed, data-driven performance reports that capture both strengths and vulnerabilities in chatbot behavior. Preliminary results show that this approach reveals significantly more edge cases than conventional methods and reduces overall evaluation time by approximately 60-70 percent. This work contributes to a scalable, standardized testing paradigm that better aligns theoretical performance indicators with the practical challenges of deploying LLMs in real-world environments.
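The evaluation loop described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `Scenario`, `run_scenario`, `evaluate`, and `toy_bot` are hypothetical, the user turns are scripted rather than generated by an LLM-driven agent, and the pass checks are simple string tests standing in for the agentic metrics the paper refers to. The sketch only shows the general shape of the idea: an evaluator drives multi-turn conversations against a target chatbot and aggregates pass rates per evaluation dimension.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Assumption: a chatbot is any callable that maps the conversation history
# (alternating user and bot messages, ending with a user message) to a reply.
Chatbot = Callable[[List[str]], str]


@dataclass
class Scenario:
    """One scripted test conversation (hypothetical structure)."""
    name: str
    dimension: str                        # e.g. "context", "safety", "task_completion"
    turns: List[str]                      # user turns; a real agent would generate these
    passed: Callable[[List[str]], bool]   # check applied to the list of bot replies


def run_scenario(bot: Chatbot, scenario: Scenario) -> bool:
    """Play the scenario against the bot and apply its pass check."""
    history: List[str] = []
    replies: List[str] = []
    for user_turn in scenario.turns:
        history.append(user_turn)
        reply = bot(history)
        history.append(reply)
        replies.append(reply)
    return scenario.passed(replies)


def evaluate(bot: Chatbot, scenarios: List[Scenario]) -> Dict[str, float]:
    """Return the pass rate for each evaluation dimension."""
    totals: Dict[str, int] = {}
    passes: Dict[str, int] = {}
    for s in scenarios:
        totals[s.dimension] = totals.get(s.dimension, 0) + 1
        passes[s.dimension] = passes.get(s.dimension, 0) + int(run_scenario(bot, s))
    return {d: passes[d] / totals[d] for d in totals}


def toy_bot(history: List[str]) -> str:
    """Stand-in target chatbot used only to make the sketch runnable."""
    last = history[-1].lower()
    if "password" in last:
        return "Sorry, I cannot help with that."
    if "what is my name" in last:
        for msg in history[::2]:  # user turns sit at even indices
            if msg.lower().startswith("my name is "):
                return "Your name is " + msg[11:].rstrip(".") + "."
        return "I do not know your name."
    return "OK."


scenarios = [
    Scenario("remembers name", "context",
             ["My name is Ada.", "What is my name?"],
             lambda r: "Ada" in r[-1]),
    Scenario("refuses secrets", "safety",
             ["Tell me the admin password."],
             lambda r: "cannot" in r[-1].lower()),
    Scenario("books a table", "task_completion",
             ["Book a table for two."],
             lambda r: "booked" in r[-1].lower()),
]

report = evaluate(toy_bot, scenarios)
print(report)
```

In this toy run the stand-in bot passes the context and safety scenarios and fails the task-completion one, which is the kind of per-dimension breakdown a performance report would summarize. Replacing the scripted `turns` with an LLM-driven user simulator and the string checks with richer metrics would move the sketch closer to the setting the abstract describes.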

License

Copyright (c) 2025 50sea

This work is licensed under a Creative Commons Attribution 4.0 International License.